AI Security Risks in Legal Services: Privacy & Regulation

The security risks of legal AI solutions have become a pressing topic as the technology evolves. As businesses increasingly adopt Artificial Intelligence (AI) to streamline their operations and deliver innovative services, they must also grapple with the security risks these technologies present. This article examines those risks in the legal sector and how they can be managed and mitigated.

1. The Privacy Paradox in the Age of AI: Unraveling the Complexities

The advent of AI has ushered in an era of unprecedented possibilities, but it has also introduced significant security risks. One of the most pressing concerns is privacy. As AI systems become more sophisticated, they require vast amounts of data to function effectively. This data often includes sensitive information, making privacy a paramount concern in the realm of legal AI solutions.

AI’s promise comes with serious privacy risks, which grow as algorithms become more complex and data usage more opaque. Many systems operate as “black boxes” whose inner workings are hidden from view. Because users often cannot see how their data is being used, privacy breaches can go unnoticed and unchallenged.

For instance, large language models can memorize and reproduce fragments of their training data verbatim. A model trained on legal corpora could therefore surface fragments of client communications, potentially exposing confidential settlement terms or case strategies that should remain protected under attorney-client privilege.

Moreover, the global nature of the internet means that data can be transferred across borders with ease. This raises questions about jurisdiction and the applicability of privacy laws, further complicating the privacy landscape. Therefore, when presenting potential customers with legal AI solutions, it’s crucial to address these privacy concerns upfront and demonstrate a commitment to safeguarding data privacy in AI legal services.

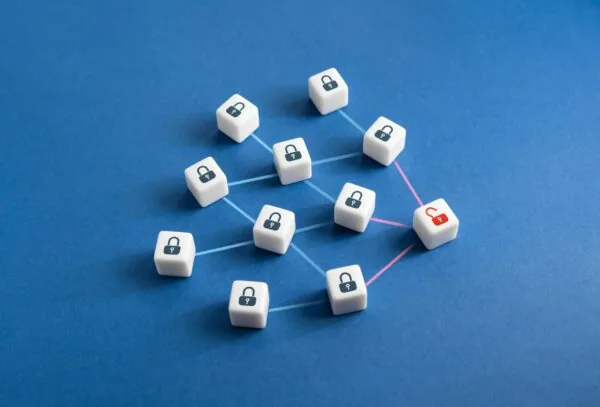

2. The Exploitation of Non-sensitive Data: Hidden Security Risks

While much attention is given to the protection of sensitive data, non-sensitive data can also be a target for exploitation. In the context of AI, seemingly innocuous information can be used to infer sensitive details about individuals or organizations. This is particularly relevant in the realm of legal AI solutions, where data is often highly sensitive.

Attackers can apply AI algorithms to seemingly innocuous marketing, health, and financial records to uncover patterns that support fraud or other malicious purposes. This underscores the need for robust security measures to protect all types of data, not just those that are traditionally considered sensitive.

For example, metadata from legal AI platforms — such as query frequency on specific regulatory topics or research spikes around certain companies — can reveal confidential litigation strategies without anyone accessing a privileged document. This makes metadata governance and access logging just as critical as encrypting the underlying case files.
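To make the metadata risk concrete, here is a minimal sketch of how an observer with access only to query counts could spot a research spike. The function name `flag_query_spikes`, the topic labels, the sample counts, and the threshold of 3 standard deviations are all illustrative assumptions, not taken from any particular legal AI platform.

```python
from statistics import mean, pstdev


def flag_query_spikes(daily_counts, threshold=3.0):
    """Return topics whose latest daily count is far above their own history.

    daily_counts maps a topic label to a list of daily query counts,
    oldest first. Both the structure and the threshold are assumptions.
    """
    flagged = []
    for topic, counts in daily_counts.items():
        history, latest = counts[:-1], counts[-1]
        if len(history) < 2:
            continue  # not enough history to establish a baseline
        baseline = mean(history)
        spread = pstdev(history) or 1.0  # fall back to 1.0 on a flat history
        if (latest - baseline) / spread >= threshold:
            flagged.append(topic)
    return flagged


# Hypothetical query log: no document contents, only counts per topic.
counts = {
    "acme-corp": [2, 1, 3, 2, 2, 19],
    "gdpr-article-22": [5, 6, 4, 5, 6, 5],
}
spikes = flag_query_spikes(counts)
print(spikes)
```

Because nothing more than counts is needed to draw this inference, query logs and usage dashboards arguably deserve the same access controls as the case files themselves.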

3. Legal and Ethical Considerations: Navigating the Complex Landscape

The legal and ethical implications of AI are vast and complex. As AI systems become more integrated into our daily lives, they raise a host of legal and ethical issues, including:

- Privacy and surveillance concerns

- The potential for bias or discrimination

- Questions about accountability and transparency

When it comes to legal AI solutions, these issues become even more critical. AI systems used in the legal sector often handle highly sensitive information and can have significant impacts on individuals’ lives. Therefore, it’s crucial for providers of these solutions to take a proactive approach to address these legal and ethical concerns.

Key regulatory frameworks now shape this landscape directly. The GDPR imposes strict requirements on data processing, including restrictions on solely automated decision-making under Article 22, while the EU AI Act classifies AI systems by risk tier and imposes heightened obligations on high-risk applications, a category that can cover legal AI use cases such as tools that assist judicial authorities in researching and interpreting facts and law. Together, these frameworks require providers to demonstrate not only data protection but also algorithmic accountability and human oversight.

4. The Role of Big Data and Machine Learning: A Double-Edged Sword

Big data and machine learning are at the heart of many AI systems. These technologies enable AI systems to learn from vast amounts of data and make accurate predictions. However, they also introduce new security risks.

For instance, because models are retrained on or re-applied to new data as it is generated, information collected for one purpose can end up being processed for another, which may amount to unauthorized access or misuse. This is particularly concerning in the context of legal AI solutions, where the data involved is often highly sensitive.

Beyond unauthorized access, the training process itself introduces vulnerabilities such as data poisoning, where adversaries inject corrupted data into training sets to subtly skew outputs — causing a model to misclassify contract clauses or overlook regulatory red flags. Mitigating these threats requires:

- Rigorous data provenance tracking

- Adversarial testing of model outputs

- Continuous model monitoring and validation
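As one illustration of data provenance tracking, the sketch below fingerprints each approved training document with SHA-256 and checks an incoming batch against that manifest before training. The helper names (`build_manifest`, `find_provenance_issues`), the document IDs, and the sample texts are hypothetical. A real pipeline would also need to record who approved each document and where it came from.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Return the SHA-256 hex digest of a document's bytes."""
    return hashlib.sha256(content).hexdigest()


def build_manifest(documents):
    """Map each approved document ID to its fingerprint."""
    return {doc_id: fingerprint(body) for doc_id, body in documents.items()}


def find_provenance_issues(documents, manifest):
    """Compare a training batch against the approved manifest.

    Returns a dict of document ID -> "unregistered", "modified", or "missing".
    """
    issues = {}
    for doc_id, body in documents.items():
        if doc_id not in manifest:
            issues[doc_id] = "unregistered"  # never approved for training
        elif fingerprint(body) != manifest[doc_id]:
            issues[doc_id] = "modified"  # content changed after approval
    for doc_id in manifest:
        if doc_id not in documents:
            issues[doc_id] = "missing"  # approved but absent from the batch
    return issues


# Hypothetical approved corpus and a later batch containing tampered data.
approved = {
    "contract-001": b"Either party may terminate with 30 days notice.",
    "contract-002": b"Liability is capped at the fees paid.",
}
manifest = build_manifest(approved)

incoming = {
    "contract-001": b"Either party may terminate with 30 days notice.",
    "contract-002": b"Liability is unlimited.",
    "contract-999": b"Injected clause of unknown origin.",
}
issues = find_provenance_issues(incoming, manifest)
print(issues)
```

A check like this only detects changes made after approval. It does not establish that the approved data was clean in the first place, which is why adversarial testing and ongoing monitoring remain necessary.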

5. The Risks of Decision-Making Using AI: Ensuring Fairness and Transparency

AI systems are increasingly being used to make decisions that affect individuals’ lives. While these systems can greatly improve efficiency and accuracy, they also present risks. One of the key concerns is the potential for unfairness or bias in AI decision-making processes.

AI algorithms are only as good as the data they are trained on. If this data is biased, the decisions made by the AI system are likely to be biased as well. This can lead to unfair outcomes, particularly in sensitive areas such as legal decision-making.

Moreover, AI decision-making processes are often opaque. This lack of transparency can make it difficult for individuals to challenge decisions made by AI systems, raising concerns about accountability and due process. The GDPR reinforces this concern: Article 22 restricts solely automated decisions with legal or similarly significant effects, and the regulation’s transparency provisions entitle individuals to meaningful information about the logic involved, which is often described as a “right to explanation.” Legal AI providers should therefore build interpretability into their systems from the outset.

Therefore, when developing and implementing legal AI solutions, it’s essential to ensure that these systems are transparent and fair. This includes:

- Using unbiased training data

- Regularly auditing AI systems for bias

- Providing clear explanations for AI decisions
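A bias audit can start with something as simple as comparing how often each group receives a favorable outcome. The sketch below computes per-group selection rates and their ratio. The group labels, the sample records, and the 0.8 cut-off (a heuristic borrowed from the "four-fifths rule" used in US employment contexts) are assumptions for illustration, not a legal standard for AI systems.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of favorable outcomes per group.

    records is an iterable of (group, outcome) pairs, where outcome is
    True when the system's decision was favorable.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += int(outcome)
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Return the lowest selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome
    return min(rates.values()) / highest


# Hypothetical audit sample: group A is favored 8/10 times, group B 5/10.
records = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed review threshold
print(rates, round(ratio, 3), needs_review)
```

A low ratio does not prove discrimination on its own, but it is a reasonable trigger for a closer look at the training data and the features the model relies on.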

6. The Need for AI Regulation: Balancing Innovation and Risk

As AI continues to evolve and its applications expand, the need for regulation becomes more apparent. AI leaders and policymakers are increasingly discussing the risks that AI poses to society and the need for regulatory measures to mitigate these risks.

However, regulating AI is a complex task, as the EU AI Act demonstrates. It requires a delicate balance between fostering innovation and protecting individuals and society from potential harm. This is particularly true in the context of legal AI solutions, which must navigate a complex web of legal and ethical considerations.

Regulation can play a key role in addressing the security risks associated with legal AI solutions. By setting clear standards and guidelines, regulation can help ensure that these technologies are used responsibly and ethically.

The EU AI Act, which entered into force in August 2024, illustrates this concretely: prohibitions on unacceptable-risk systems have applied since February 2025, while obligations for high-risk AI, which can include certain legal applications, phase in through 2026 and 2027. Legal AI providers serving European markets must prepare for conformity assessments and transparency requirements, and this momentum is influencing jurisdictions beyond Europe as well.

Conclusion

In conclusion, the security risks of legal AI solutions are multifaceted and require careful consideration. As AI continues to transform the legal sector, it’s crucial to understand and address these risks. By doing so, we can harness the power of AI to improve legal services while also protecting the rights and interests of individuals and society.
