Small Language Models in LegalTech: Part 1 – SLM vs LLM

What Are Small Language Models (SLMs)?

Small Language Models (SLMs) are compact AI language models designed to process and generate text with far fewer computational resources than the giant models behind tools like ChatGPT.

In practice, SLMs typically operate with fewer than 30 billion parameters, whereas large language models (LLMs) can have hundreds of billions or more (OpenAI has not published GPT-4’s parameter count, but it is widely assumed to be in that range). By being smaller, SLMs focus on specific tasks or domains – for example, analyzing legal documents – rather than trying to cover the breadth of all human knowledge.

This focused approach means SLMs can often achieve high efficiency and speed while maintaining strong performance on their intended tasks. For a practical comparison, see our guide on which AI models to use, which covers the major options. Developers often train SLMs on carefully curated, domain-specific data (such as legal texts) rather than the massive, diverse datasets used for LLMs. This specialization enables them to excel in niche applications with faster responses and lower resource needs, making them attractive for legal settings.

In summary, an SLM is a slimmed-down language model optimized for efficiency. In legal practice, that means it can work quickly, on-premises, and with targeted legal knowledge, in sharp contrast to the cloud-dependent behemoths that serve as general-purpose LLMs.

This article is part of a multi-part series dedicated to introducing Small Language Models (SLMs) and their growing impact on legal technology. Throughout this series, we’ll dive into the essential aspects of SLMs for legal professionals—exploring their advantages, limitations, best practices, and real-world applications for law firms and in-house counsel.

In this first installment, we’ll cover the basics:

- What are Small Language Models (SLMs)?

- How do SLMs differ from Large Language Models (LLMs)?

- Why are SLMs becoming a game changer in the legal sector?

Each upcoming installment will focus on a specific topic surrounding SLMs, providing you with in-depth insights to help you make informed decisions about adopting AI in your legal practice. Stay tuned for more practical guidance and case studies in the weeks ahead.

Key Differences: Small Language Models (SLMs) vs. Large Language Models (LLMs)

While both SLMs and LLMs are AI models that generate and interpret human language, there are key differences in scale, focus, and practicality that set them apart:


- Size and Parameters: SLMs are “small” by design – usually in the millions to low tens of billions of parameters – whereas LLMs boast hundreds of billions or even trillions. For instance, an SLM might have 7B parameters targeted at legal text processing, whereas GPT-4 (an LLM) uses a vastly larger network. This lean size makes SLMs lighter and faster.

- Specialization vs. Generalization: SLMs are typically trained on curated, domain-specific data, making them experts in a niche (e.g., contract language, GDPR regulations). Developers train LLMs on expansive internet-scale data, making them generalists capable of answering a wide range of questions, though not always with domain-specific depth. In practice, an SLM might more reliably interpret a complex contract clause, while an LLM might know a bit about many topics (legal and non-legal).

- Speed and Efficiency: SLMs typically offer snappier response times (often measured in milliseconds on suitable hardware) and use far less memory and energy. LLMs, being larger, can be slower – sometimes taking seconds or more to respond – and consume much more computational power per query. The difference has been likened to a compact car versus a heavy truck: one accelerates quickly and fits in small spaces (SLM), the other carries more but at higher cost and with slower maneuvering.

- Cost and Accessibility: SLMs are cheaper to train and operate. Training an SLM on a legal dataset might cost on the order of thousands of dollars, whereas a state-of-the-art LLM can cost millions to develop. For law firms or legal departments, SLMs put AI within reach without the hefty pay-as-you-go API fees that often come with large third-party models. The energy usage is also much lower for SLMs, aligning with firms’ cost-saving and sustainability goals.

- Privacy and Compliance: Perhaps most crucially for legal applications, SLMs can be deployed behind a firm’s firewall, keeping sensitive data in-house. Large cloud LLMs typically send data off-site for processing, raising concerns about client confidentiality and data protection. SLMs, by contrast, allow firms to maintain complete control over data since the models can run locally. This key difference means SLMs can more easily meet strict privacy requirements such as the EU’s GDPR, since no personal data needs to leave the organization’s servers.

Ideal Applications Differ for SLMs and LLMs

It’s worth noting that these differences also mean SLMs and LLMs each have their ideal uses. LLMs still have an advantage in tackling very broad or complex linguistic tasks (thanks to their sheer scope of training), whereas SLMs shine for specialized, secure, and speedy applications. As one analysis put it, teams should “choose SLMs when the use case demands specialized performance, local deployments or complete control over data”, and opt for cloud LLMs only when broad, versatile intelligence is needed without strict privacy or latency requirements.

Why Are Small Language Models (SLMs) Trending in LegalTech?

Privacy and data security concerns are a driving force behind the SLM surge in the legal industry.

Law firms and in-house counsel handle troves of confidential client information, and many are understandably wary of sending this data to cloud-based AI services.

In fact, a 2023 Thomson Reuters survey found that a significant number of law firms have restricted or banned the use of tools like ChatGPT, with lawyers citing that they “do not fully trust … particularly the public-facing ChatGPT … with confidential client data”.

This mistrust of big cloud AI, coupled with stringent regulations like the EU’s GDPR and growing security risks in legal AI, has created a demand for AI solutions that keep data on-premises. SLMs fulfill this need by allowing legal teams to run AI models behind their own firewall or in a private cloud, ensuring sensitive data (like draft contracts or case files) never leaves their control.

European regulators have even highlighted this advantage: the European Data Protection Board noted that using SLMs locally can enhance privacy by reducing reliance on centralized LLMs, a clear nod to the technology’s fit with GDPR compliance.

Affordability and accessibility are further reasons SLMs are gaining traction in LegalTech.

Traditional large AI models often come with pay-per-use fees or expensive infrastructure requirements that small and mid-sized law firms find prohibitive. By contrast, SLMs are cost-effective – both to deploy and to run – putting advanced AI capabilities within reach for firms that don’t have Silicon Valley-sized tech budgets.

For example, many firms can run an open-source 7B-parameter model for legal document review on standard hardware they already own, at a fraction of the cost of using a massive API-based model. This lower cost of entry means even boutique firms or solo practitioners can experiment with AI-powered legal drafting or research tools. As a result, SLMs are democratizing legal AI, allowing wider adoption across the industry.
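To make the "standard hardware" point concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights need at different numeric precisions. It is an estimate under stated assumptions, not a sizing guide: the function names are illustrative, and the 1.2 overhead factor for runtime working memory is an assumed placeholder that varies with the software and workload.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for the model weights only, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_in_memory(num_params: float, precision: str,
                   available_gb: float, overhead: float = 1.2) -> bool:
    """Rough check whether a model could fit in the given memory.

    `overhead` is an ASSUMED multiplier for runtime working memory;
    real usage depends on context length and the inference software.
    """
    return weight_memory_gb(num_params, precision) * overhead <= available_gb


if __name__ == "__main__":
    seven_b = 7e9  # a 7B-parameter model, as in the example above
    for precision in ("fp16", "int8", "int4"):
        gb = weight_memory_gb(seven_b, precision)
        print(f"7B model at {precision}: about {gb:.1f} GB for weights")
```

By this estimate, the weights of a 7B-parameter model take about 14 GB at 16-bit precision and about 3.5 GB when quantized to 4 bits, which is why such models can be candidates for a well-equipped workstation rather than a data center. Quantization can reduce output quality, so the trade-off should be tested on the firm's own tasks.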

Customization and control over the AI’s knowledge base also make SLMs appealing.

Legal work often involves highly specific domains (e.g. maritime insurance law, or the internal policies of a corporation) that generic models might not grasp well.

A firm can fine-tune an SLM on its own corpus of documents to yield a model that speaks the firm’s language. Typical fine-tuning sources include:

- Past case briefs and legal memoranda

- Contract templates and clause libraries

- Regulatory filings and compliance documents

On a small model, this targeted training can quickly produce more accurate and relevant outputs for those niche tasks.
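As an illustration of what preparing such a corpus might involve, the sketch below splits documents into fixed-size chunks and serializes them as JSON Lines, a format many training tools can read. Everything specific here is an assumption for illustration: the field names (`source`, `chunk`, `text`), the 200-word chunk size, and the helper names are hypothetical, and a real pipeline would also need de-identification, deduplication, and a review of what client data may be used for training.

```python
import json


def chunk_text(text: str, max_words: int = 200) -> list[str]:
    """Split a document into consecutive chunks of at most `max_words` words."""
    words = text.split()
    return [" ".join(words[i:i + max_words])
            for i in range(0, len(words), max_words)]


def build_records(documents: list[dict], max_words: int = 200) -> list[dict]:
    """Turn documents into training records, one per chunk.

    Each input document is a dict with a hypothetical "source" label
    (e.g. "case_brief", "contract_template") and its plain "text".
    """
    records = []
    for doc in documents:
        for idx, chunk in enumerate(chunk_text(doc["text"], max_words)):
            records.append({"source": doc["source"], "chunk": idx, "text": chunk})
    return records


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


if __name__ == "__main__":
    sample = [
        {"source": "contract_template",
         "text": "The Supplier shall indemnify the Customer against all losses."},
    ]
    print(to_jsonl(build_records(sample, max_words=5)))
```

Keeping the source label on every record makes it possible to later check which document types the model saw, or to exclude a category if a confidentiality concern arises.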

Lawyers appreciate that an SLM tuned to their needs will, for example, use the preferred clause language from their own contract library, and will be less likely to “hallucinate” irrelevant or incorrect citations. In other words, focus breeds reliability. A LegalTech provider emphasizes that in law, “accuracy matters just as much as, if not more than, scale”, and smaller specialized models avoid many of the pitfalls (like factual errors) that plague their larger counterparts.

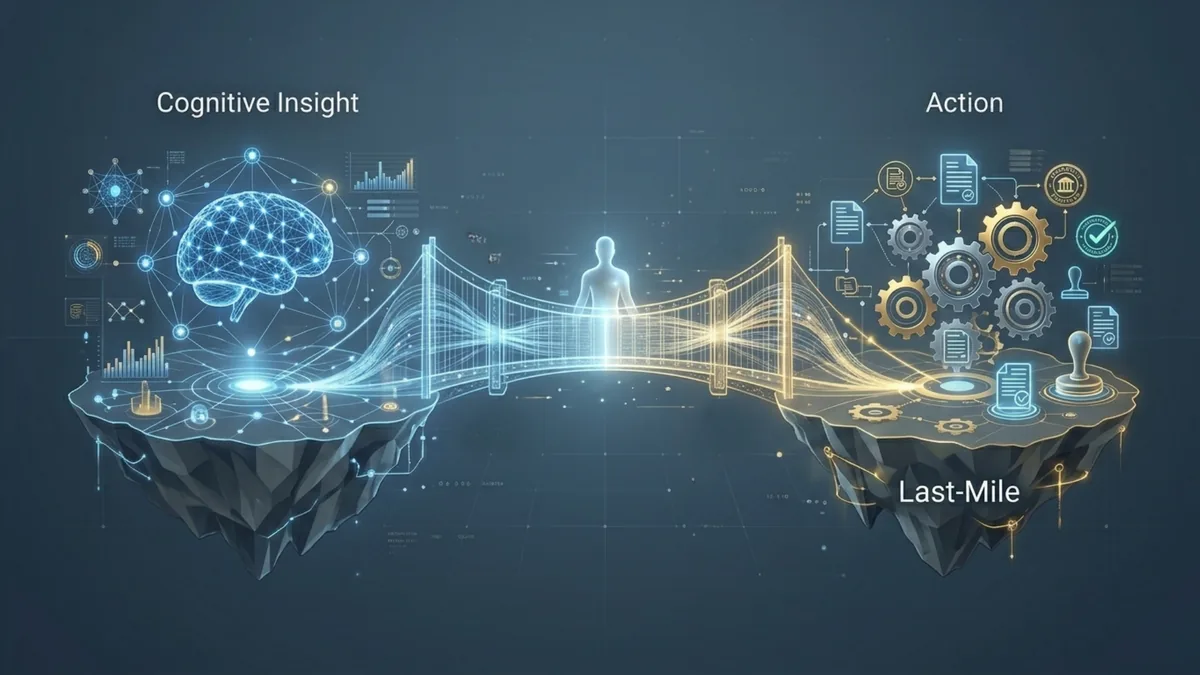

Finally, SLMs are trending because of their fast performance and seamless integration into legal workflows.

These models can deliver answers with near-zero latency, which is critical during time-sensitive legal tasks like contract negotiation or court filings.

One industry expert noted that SLMs now approach “Big Law research performance” – meaning they can sift through and analyze legal information almost as effectively as top lawyers – but at “minuscule cost and near-zero latency.”

Whether it’s an associate getting a quick summary of a 100-page contract or an in-house counsel running a compliance checklist, SLMs provide results in a snap. Moreover, they can be integrated into familiar tools (such as Microsoft Word or document management systems), often via on-premises plugins, so that lawyers can leverage AI assistance without changing platforms or compromising on data security.

The combination of client confidentiality, cost-efficiency, customization, and speed explains why SLMs have become one of the hottest LegalTech trends, particularly in Europe, where data sovereignty is paramount.
